Why it’s impossible to build an unbiased AI language model

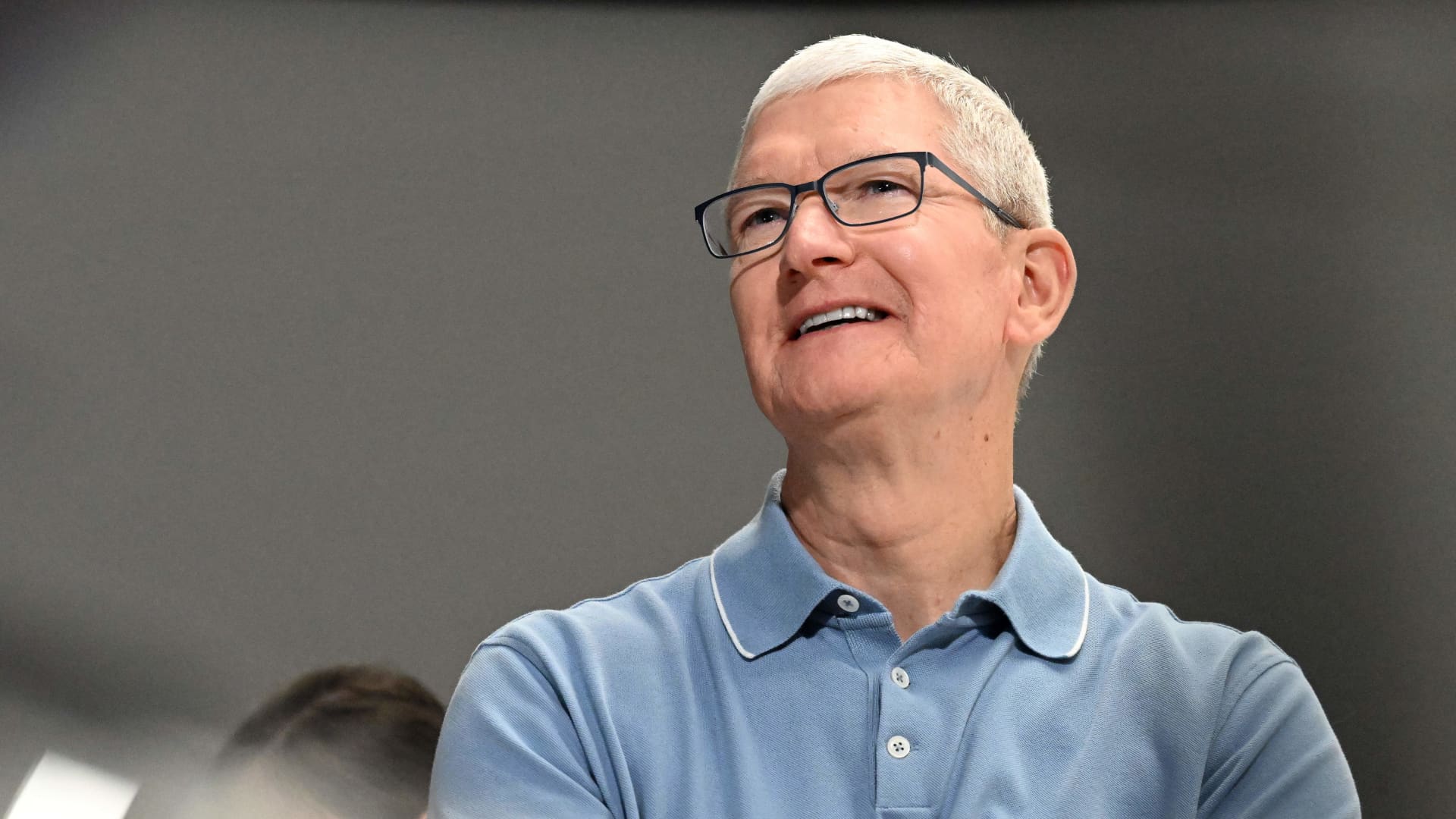

An unbiased, purely fact-based AI chatbot is a cute idea, but it’s technically impossible. (Elon Musk has yet to share any details of what his TruthGPT would entail, probably because he is too busy thinking about X and cage fights with Mark Zuckerberg.) To understand why, it’s worth reading a story I just published on new research that sheds light on how political bias creeps into AI language systems. Researchers conducted tests on 14 large language models and found that OpenAI’s ChatGPT and GPT-4 were the most left-wing libertarian, while Meta’s LLaMA was the most right-wing authoritarian.

“We believe no language model can be entirely free from political biases,” Chan Park, a PhD researcher at Carnegie Mellon University, who was part of the study, told me.

One of the most pervasive myths around AI is that the technology is neutral and unbiased. This is a dangerous narrative to push, and it will only exacerbate the problem of humans’ tendency to trust computers, even when the computers are wrong. In fact, AI language models reflect not only the biases in their training data, but also the biases of the people who created and trained them.

And while it is well known that the data that goes into training AI models is a huge source of these biases, the research I wrote about shows how bias creeps in at virtually every stage of model development, says Soroush Vosoughi, an assistant professor of computer science at Dartmouth College, who was not part of the study.

Bias in AI language models is a particularly hard problem to fix, because we don’t really understand how they generate the things they do, and our processes for mitigating bias are not perfect. That in turn is partly because biases are complicated social problems with no easy technical fix.

That’s why I’m a firm believer in honesty as the best policy. Research like this could encourage companies to track and chart the political biases in their models and be more forthright with their customers. They could, for example, explicitly state the known biases so users can take the models’ outputs with a grain of salt.

In that vein, earlier this year OpenAI told me it is developing customized chatbots that are able to represent different politics and worldviews. One approach would be to allow people to personalize their AI chatbots. This is something Vosoughi’s research has focused on.

As described in a peer-reviewed paper, Vosoughi and his colleagues created a method similar to a YouTube recommendation algorithm, but for generative models. They use reinforcement learning to guide an AI language model’s outputs so that it generates text reflecting particular political ideologies or avoids hate speech.